AI and vishing: when your voice becomes a threat

Cyber threats are constantly evolving, adapting to new technologies to target their victims with formidable efficiency. Among these threats, vishing, or voice phishing, stands out for its ability to manipulate individuals through voice.

While this technique has been around for many years, the use of artificial intelligence (AI) in these attacks is a game changer, making fraud more convincing and harder to detect.

The emergence of AI allows cybercriminals to automate calls, exploit personal data to create customized scenarios, and even impersonate voices in a very realistic way.

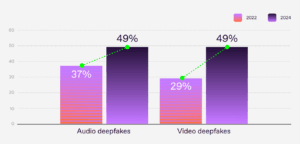

According to a study published by Regula, 49% of global companies faced identity theft through audio deepfakes in 2024, compared to only 37% in 2022.

The percentage of companies that detected audio and video deepfakes in 2022 and 2024. Source: Regula

Technological advances and the growing links between AI and vishing only amplify the impact of voice phishing attacks, which target individuals as well as businesses, government agencies, and even associations.

AI and vishing, deepfakes and deepvoices: overview and definitions

Deepfake: AI used for fraud

A deepfake, a contraction of "Deep Learning" and "Fake," is a technology that uses artificial intelligence to create or modify visual, audio, or video content, realistically imitating faces, voices, or gestures.

It allows a face or voice to be superimposed onto another medium to simulate situations that never happened.

Audio deepfakes are sometimes referred to as deepvoices. This technology focuses specifically on synthesizing and imitating the human voice. Using deep learning models, deepvoice systems can analyze voice samples to clone them in an extremely convincing way.

These techniques offer opportunities, notably in the music and medical fields. However, they also raise ethical and security concerns, especially with regard to cybersecurity and, more specifically, voice phishing (vishing).

What is vishing?

Vishing (also known as "voice phishing") is a form of telephone fraud in which cybercriminals pose as trusted contacts in order to obtain sensitive information.

Unlike email phishing, vishing relies on voice calls. But in both cases, social engineering methods are used to deceive victims by exploiting their emotions (fear, trust, sense of urgency, etc.).

Attackers generally pose as representatives of well-known companies, banks, or government agencies to lower their target's guard.

The goal of vishers (cybercriminals who practice vishing) is to trick victims into disclosing confidential data. This may include usernames, passwords, banking information, or access to internal systems.

This form of cybercrime is particularly dangerous because it has an alarmingly high success rate. This is especially true when AI and vishing are combined through the use of deepvoice technology.

How does deepvoice work?

In recent years, we have seen the development of software using speech synthesis technologies. These enable realistic voices to be generated using artificial intelligence.

These platforms offer tools for creating personalized voices, imitating existing voices, or generating dynamic narrations in multiple languages. They are used in content production, education, entertainment, accessibility solutions, and more.

Even though the companies behind these platforms theoretically prohibit the fraudulent use of their tools, in reality there are few safeguards in place.

According to research conducted by McAfee, it takes only 3 or 4 seconds of voice recording to successfully clone a voice using tools available on the Internet.

Even with free tools, researchers were able to reproduce a voice that was 85% faithful to the original. With more sophisticated tools and more raw material, the fidelity rate rises to 95%.

Access to deepvoices has therefore become very simple and no longer requires any special technical skills. According to Recorded Future, cybercriminals even offer their own voice cloning services for a fee.

AI and vishing: Open sesame

Deepvoice to facilitate initial access

To compromise a system through a vishing attack, a cybercriminal needs initial access. This usually involves the hacker calling the victim directly. Sometimes the target receives an email asking them to call the criminal back, for example, under the pretext of resolving a technical issue.

In all cases, the visher plays a role and impersonates someone who will inspire trust in the victim (bank advisor, IT technician, government official, etc.).

The use of a deepvoice makes this initial access easier. Being able to impersonate not only a role, but also the voice associated with that role, goes a long way toward gaining the victim's trust.

This is especially true when the attack also uses spoofing, a relatively simple technique that allows hackers to impersonate a phone number.

By pretending to be a superior, a colleague, or even a personal banking advisor, the visher does not have to convince the target of who they are: the target immediately recognizes a familiar voice.

This makes it easier to persuade the target to carry out a financial transaction, grant remote access to the computer system, or transmit sensitive data.

Lateral movement and privilege escalation

In vishing, an attacker may use two techniques to achieve their goals: lateral movement and privilege escalation.

Lateral movement involves an attacker moving within a compromised system to other internal resources. This allows them to expand their access and target critical data or systems.

Privilege escalation, on the other hand, allows attackers to obtain higher privileges. The attacker can then access sensitive data or modify settings that were not accessible to them initially.

An attack combining AI and vishing makes both techniques easier and facilitates deep intrusion into computer systems.

In practical terms, it is generally easier to deceive an employee working at a switchboard than an IT network administrator. The latter is more aware of the techniques used by cybercriminals.

Once in contact with the organization, a visher can easily record a caller's voice directly or retrieve recordings found on the network following initial access.

They can then train a deepvoice model, which lets them engage in credible interactions with other targets within the organization and gain access to increasingly sensitive systems and data.

Where do vishers find their sources of information?

Advances in communication technology make it easier for vishers to collect voice samples without even having to infiltrate a network beforehand.

In fact, more and more people are recording their voices on the Internet. This can be done via videos posted on social media or via voice messages exchanged on messaging apps.

According to the McAfee study cited above, 55% of French people record their voice at least once a week. Some of these recordings are public, while others could become public following a data leak.

When it comes to business leaders, filmed conferences or interviews can serve as a source for malicious individuals. Even a voicemail greeting inviting callers to leave a message can be another source.

With the development of AI, this resource can enable hackers to very easily impersonate a person's voice to carry out an attack directly at the highest level.

The combination of AI and vishing: a present and future threat

When reality catches up with science fiction

According to the McAfee study, 11% of French people have already been directly confronted with a vishing attempt involving voice spoofing, and 16% know someone to whom this has happened.

However, globally, 36% of adults surveyed say they have never heard of this risk. The threat is therefore both very serious and underestimated.

Furthermore, according to the Regula study cited in the introduction, more than 85% of companies consider identity theft through audio or video deepfakes to be a threat that must be taken into account.

The report also indicates that companies are more concerned about the negative effects on their reputation than the financial losses associated with attacks. This probably explains the discretion of the companies affected and the fact that this issue is not receiving more media coverage at the moment.

Several cases of victimized companies

There are numerous cases of individuals falling victim to scams using AI and vishing, particularly in the United States. The Federal Trade Commission even points to vishing as the most dangerous attack in terms of average financial losses.

When it comes to businesses, examples are harder to find, mainly because of their lack of communication in the event of an attack. However, a few cases have been made public in recent years.

In 2019, the head of a British energy company fell victim to vishing, transferring €220,000 to a fraudulent account after receiving a purported phone call from his CEO in Germany. The attacker used deepvoice technology to perfectly mimic the voice and German accent of the person whose identity was being stolen.

Worse still, in early 2024, an employee of a multinational corporation was tricked by a fake videoconference in which all the participants were animated by deepfakes. The result: $25 million was transferred in good faith by the duped employee.

A similar case had already been reported in 2020, with $35 million stolen from a Japanese company.

These few examples clearly demonstrate the potential of attacks combining AI and vishing. What's more, it's important to remember that the worst is probably yet to come and that no company can consider itself immune.

Attacks already dangerous despite technical limitations

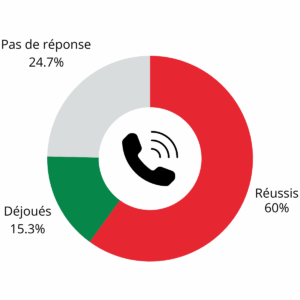

A study conducted in 2024 bythe Polytechnic School of Quito simulated a vishing campaign based on consumer voice cloning software.

The results are alarming, as on average 60% of those contacted passed on sensitive information to their correspondent. Only 15% of targets thwarted the attack.

Results of vishing tests conducted by the National Polytechnic School of Quito on 150 calls in a university setting.

However, the various studies cited highlight the limitations of deepvoice technologies. For example, AI models have difficulty pronouncing complicated words correctly or imitating unusual voices or expressions.

It is nonetheless likely that these limits will soon be overcome and that further developments will enable even more sophisticated attacks.

Deepvoice live: a future scourge?

The use of real-time voice cloning is a worrying development to consider. It will allow hackers to interact directly with their target.

Currently, vishers create recordings prior to their attacks. They then play them back once they are online with their victim. If the victim deviates from the script, the attack is likely to fail.

To counter this limitation, hackers work on the scenario to anticipate the questions their targets might ask. They thus avoid interactions as much as possible, as explained by a specialist in a conference available online.

However, a French newspaper recently reported a disturbing account of an attack in which the scammer used a live deepvoice. A son who thought he was talking to his mother on the phone was able to avoid the trap by noticing inconsistencies in the responses of his supposed mother. This was the first case reported in France, according to Cybermalveillance.gouv.fr.

We therefore know that it is only a matter of time before AI enables attackers to conduct live calls, and even video calls, in a sufficiently convincing manner.

How can you protect yourself against attacks combining AI and vishing?

The right reflexes and procedures to put in place

Fighting vishers is no easy task, as technology is constantly evolving and hackers always stay one step ahead. However, there are a few procedures and technical solutions that can protect you from attacks combining AI and vishing.

1. Implement multi-factor authentication (MFA) to access critical information or perform banking transactions.

2. Implement verification procedures to confirm a person's identity before responding to sensitive requests over the phone (call back to a verified number, voice password, voiceprint recognition, etc.).

3. Use only secure corporate chat rooms for all sensitive conversations and do not grant any exceptions to this procedure.

4. Use authentication and call blocking technologies to filter incoming calls.

5. Ask the person specific questions that only someone who really knows you would be able to answer.

6. Be alert to inconsistencies in the other person's speech (unusual pauses, changes in background noise, changes in tone, etc.).

7. Avoid sharing information if you have any doubts about the person you are talking to.

8. Report suspicious calls to the IT security department and your coworkers to prevent anyone else from falling into the trap.
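As an illustration of measure 2 above, here is a minimal sketch of a callback rule combined with a shared passphrase. Everything in it is an assumption made for the example: the `VERIFIED_NUMBERS` directory, the `PhoneRequest` fields, and the two outcomes are hypothetical names, not part of any specific product. The point it shows is that the displayed caller ID never enters the decision, since it can be spoofed.

```python
import hmac
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of verified numbers, maintained independently
# of any incoming call (for example, from HR or contract records).
VERIFIED_NUMBERS = {"finance.director": "+33 1 00 00 00 01"}


@dataclass(frozen=True)
class PhoneRequest:
    claimed_identity: str      # who the caller says they are
    displayed_caller_id: str   # shown on the phone; can be spoofed, so never trusted
    is_sensitive: bool         # transfer, credentials, remote access, etc.


def number_to_call_back(request: PhoneRequest) -> Optional[str]:
    """Look up the callback number from the directory, not from the call itself."""
    return VERIFIED_NUMBERS.get(request.claimed_identity)


def passphrase_matches(spoken: str, expected: str) -> bool:
    """Compare a spoken passphrase to the agreed one, ignoring case and outer spaces."""
    return hmac.compare_digest(
        spoken.strip().lower().encode("utf-8"),
        expected.strip().lower().encode("utf-8"),
    )


def decide(request: PhoneRequest, reached_on_verified_number: bool,
           passphrase_ok: bool) -> str:
    """Return 'proceed' or 'refuse_and_report' for a phone request."""
    if not request.is_sensitive:
        return "proceed"
    if number_to_call_back(request) is None:
        # No independently verified number exists for this identity.
        return "refuse_and_report"
    if reached_on_verified_number and passphrase_ok:
        return "proceed"
    return "refuse_and_report"
```

In this sketch, a sensitive request is only honored after the employee has hung up, called the number from the directory, and heard the agreed passphrase. A familiar voice alone changes nothing.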

These protective measures help reduce the risk of falling into the vishing trap. However, the list provided is not exhaustive, and defense technologies are constantly evolving, as are attack technologies. Against this relentless advance, the best solution remains to raise awareness and test users.

Raise awareness and test with a vishing simulation

The best weapon vishers have is their victims' ignorance. Someone who is aware of the risks is much more likely to avoid falling into a trap.

Raising awareness and communicating with family members and colleagues helps to increase their vigilance against attempts at manipulation.

This approach helps establish a culture of cybersecurity within organizations, where each person becomes an active link in the protection of sensitive systems and data.

Nevertheless, to assess an organization's level of risk in relation to vishing, the best approach is to conduct a vishing simulation that tests employees' reflexes in real-life conditions. Procedures can then be adjusted based on the recommendations resulting from the simulation.

Check if your organization is prepared to counter a vishing attack with AvantdeCliquer.

Conclusion

Artificial intelligence is transforming the landscape of cyber threats, and vishing is a striking example of this. For individuals, businesses, associations, and public bodies, "vigilance" and "prevention" must become the watchwords.

Investing in awareness campaigns and vishing simulations, adopting appropriate technological solutions, and strengthening internal procedures are all ways to protect yourself.

The fight against growing threats combining AI and vishing requires collective mobilization and constant adaptation to new technological realities.

These risks, although sometimes still in their infancy, require increased vigilance and proactive measures to anticipate and counter their potential development.

FAQ: AI and vishing

1. What is vishing?

Vishing is a form of telephone fraud in which cybercriminals pose as trusted contacts. Their goal is to manipulate victims into providing sensitive information or access to systems. Unlike traditional phishing (via email), it relies on voice calls.

2. What is deepvoice?

Deepvoice uses AI to clone human voices realistically. By analyzing a few seconds of a voice sample, the software can create synthetic voices that are close to the original. Cybercriminals can then imitate relatives, colleagues, or company executives to enhance the credibility of their attacks.

3. How do cybercriminals collect voice samples?

Voice samples are often collected via:

- Social media (public videos or audio recordings).

- Professional recordings (conferences, interviews).

- Data leaks from various platforms.

- Recordings captured or stolen following initial access to the system.

4. Is vishing a growing threat?

Yes. With advances in artificial intelligence, vishing attacks are becoming increasingly sophisticated and difficult to detect. Vigilance and prevention are essential to counter this growing threat.

5. What are the risks for businesses when it comes to vishing?

Companies are exposed to several risks, including:

- Disclosure of sensitive data, such as information provided by customers.

- Compromise of the computer system with data deletion or encryption.

- Financial losses related to fraudulent transfers.

- Damage to the organization's reputation.

6. What are the best practices for avoiding a vishing attack?

- Always verify the identity of the person you are talking to before providing sensitive information.

- Do not respond to urgent requests without double-checking.

- Be wary of inconsistencies in the speech or behavior of the person you are talking to.

- Report any suspicious calls to your security department or the appropriate authorities.

7. How can you protect yourself against attacks combining AI and vishing?

Here are some measures to reduce the risks:

- Set up multi-factor authentication (MFA).

- Use strict identity verification procedures.

- Limit the publication of voice samples on public platforms.

- Use tools to block and detect suspicious calls.

- Raise employee awareness of the risks of vishing.

- Test defenses with a vishing simulation.
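To make the first measure in this list more concrete, here is a minimal sketch of a time-based one-time password (TOTP), one common second factor, written with the Python standard library only. It follows the general scheme of RFC 6238 with HMAC-SHA1 and 30-second steps. The function names and the way the secret is shared are assumptions made for this example; a real deployment would rely on an established authentication product rather than hand-written code.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Counter-based one-time password (HMAC-SHA1 with dynamic truncation)."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password: HOTP over the current time step."""
    if at_time is None:
        at_time = time.time()
    return hotp(secret, int(at_time) // step, digits)


def verify(secret: bytes, submitted: str, at_time: Optional[float] = None,
           step: int = 30, window: int = 1) -> bool:
    """Accept a code from the current time step or `window` steps around it."""
    if at_time is None:
        at_time = time.time()
    current = int(at_time) // step
    for counter in range(current - window, current + window + 1):
        if counter < 0:
            continue  # no time step exists before the epoch
        expected = hotp(secret, counter, len(submitted) or 6)
        if hmac.compare_digest(expected.encode("ascii"),
                               submitted.encode("utf-8")):
            return True
    return False
```

Because the code changes every 30 seconds and depends on a secret the caller does not hold, a cloned voice alone is not enough to pass this check.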

8. What is a vishing simulation?

A vishing simulation is a controlled scenario designed to test an organization's ability to recognize and counter vishing attacks. It helps identify vulnerabilities, raise employee awareness, and improve security procedures.

9. Does AvantdeCliquer offer vishing simulations?

Yes, the AvantdeCliquer team has specialized in raising awareness of all forms of social engineering attacks since 2017. We can help you test the resilience of your organization and your employees to a vishing attack. Contact us to find out more.